The common denominator in most companies of all sizes, from startups to large corporations, is the adoption of technology as a means to bring new products and services to their users.

This process of technological adoption, which in turn is fueled by a strong trend to integrate more and more electronic devices into our daily lives, generates huge volumes of data that are collected with increasing efficiency and speed.

Companies have already understood that data holds intrinsic value for personalizing their products and services.

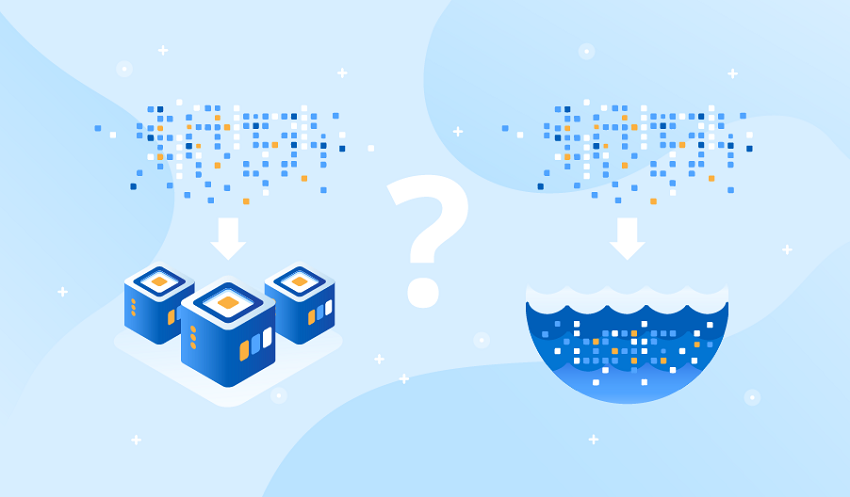

When data volumes were not yet at today's scale, data was stored in data warehouses.

There, the stored data was, for the most part, structured and came from transactional systems, whose organization into schemas facilitated its consumption and use.

When the characteristics and volumes of data became more complex, the need arose for a new way to store them in order to extract their value and transform them into information.

That’s when the concept of a data lake was born.

Today, both models coexist, with their peculiarities and advantages.

The data warehouse is ideal for projects that have been in development for some time, where we are already familiar with the data and know exactly what we want from it. Data lakes allow greater flexibility at lower cost, so they are ideal for implementing new projects, which can then be integrated into the data warehouse.

When deciding which data storage model is appropriate to adopt for a project, it is important to know what advantages and disadvantages each one offers.

In this note, we share our expertise in data lakes and data warehouses, after more than 10 years of working with data science and these data repositories.

Differences between Data Lakes and Data Warehouses

Data Lake: Advantages and Disadvantages

Advantages:

- No need to discard data

- Can serve multiple users across a company

- Easily adapts to changes

- Because it can integrate very different types of data, all kinds of analysis can be carried out

- Easily add new data

Disadvantages:

- It is not designed for high-performance data access

- Every time data is required, it must be transformed and curated for the intended use

- Requires investment in establishing good-practice standards at the organizational level

Data Warehouse: Advantages and Disadvantages

Advantages:

- The data is ready to be used

- Good performance in data access

- Most users in a company are operational, and a data warehouse is ideal for them

- It is very suitable for generating reports and metrics

Disadvantages:

- Higher storage costs, which means thinking about what data is really necessary

- It does not adapt easily to change

- Requires time up front, before storage, to decide on schemas, formats, and use cases
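The trade-off between the two lists can be illustrated with a minimal sketch. It assumes a handful of made-up JSON events standing in for raw lake data and an in-memory SQLite table standing in for the warehouse; the event fields and the `purchases` table are hypothetical and not taken from any real system.

```python
import json
import sqlite3

# Hypothetical raw events as they might land in a data lake: no fixed schema.
RAW_EVENTS = [
    '{"customer": "ana", "amount": "19.90", "channel": "web"}',
    '{"customer": "luis", "amount": 5, "device": {"os": "android"}}',
    '{"customer": "ana", "note": "support call, no purchase"}',
]


def read_purchases_from_lake(raw_lines):
    """Lake side: parse and curate at read time, for this one use."""
    purchases = []
    for line in raw_lines:
        record = json.loads(line)
        if "amount" not in record:
            continue  # not a purchase; the raw record still stays in the lake
        purchases.append((record["customer"], float(record["amount"])))
    return purchases


def load_warehouse(purchases):
    """Warehouse side: the table structure is decided before anything is stored."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE purchases (customer TEXT NOT NULL, amount REAL NOT NULL)"
    )
    conn.executemany("INSERT INTO purchases VALUES (?, ?)", purchases)
    return conn


conn = load_warehouse(read_purchases_from_lake(RAW_EVENTS))
totals = conn.execute(
    "SELECT customer, SUM(amount) FROM purchases GROUP BY customer ORDER BY customer"
).fetchall()
print(totals)
```

The lake keeps all three events, including the one that is not a purchase, but every consumer has to curate them again. The warehouse table only accepts rows that already fit its schema, which is why reading from it is quick and changing it is costly.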

How is a Data Warehouse implemented?

- Determine the business objectives

It is important to know the purpose the warehouse will serve, since generating reports, for example, is not the same as obtaining key metrics for decision-making in the startup.

What do we want to use the stored data for?

By answering this question, we can decide the level of detail and granularity of the data to be stored from each source.
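To make the idea of granularity concrete, here is a small sketch that rolls transaction-level rows up to one row per day. The `ORDERS` sample and the `roll_up_daily` helper are hypothetical; the assumption is that daily revenue is the metric the business objective calls for.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical order events at the finest grain: one row per transaction.
ORDERS = [
    ("2024-03-01T09:15:00", 20.0),
    ("2024-03-01T17:40:00", 35.0),
    ("2024-03-02T11:05:00", 12.5),
]


def roll_up_daily(orders):
    """Aggregate transaction-level rows to one row per day."""
    daily = defaultdict(float)
    for timestamp, amount in orders:
        day = datetime.fromisoformat(timestamp).date().isoformat()
        daily[day] += amount
    return dict(daily)


daily_revenue = roll_up_daily(ORDERS)
print(daily_revenue)
```

Storing only the daily grain is cheaper, but the time of day of each order can no longer be recovered, so the choice should follow from the questions the warehouse has to answer.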

- Collect and analyze information

Once we know the why, it is important to identify, together with our company’s collaborators, what use will be made of the information:

What sources of information do they consult? Based on what information do they compose their reports?

Often we can find important data sources in paper documents, calendars, emails, and memos. The big challenge will be figuring out how to collect all that information.

To achieve a full understanding of how to accumulate and process information, close interaction with the users who are going to use the Data Warehouse within the company is essential.

- Identify key business processes and modeling

At this stage, when we already know how the data will be used and what information is relevant, we must identify the other companies that are related to our startup and how those relationships work.

In this way, we will determine how the different data structures have to be related and we will be able to put together a conceptual model.

- Determine sources and plan data transformations

Now that we know what information we need and what structure we will give it, all that remains is to obtain all that information.

Once the necessary information sources have been identified, the process of loading and transforming this data begins, so that it adapts to the previously defined model and structures.
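A minimal sketch of such a load-and-transform step follows. It assumes a hypothetical CRM export with its own field names and date format, and a hypothetical `dim_customer` table as the previously defined model; SQLite in memory stands in for the warehouse.

```python
import sqlite3
from datetime import datetime

# Hypothetical source extract: field names and formats belong to the source.
CRM_ROWS = [
    {"Customer Name": " Ana Perez ", "Signup": "01/03/2024", "Plan": "PRO"},
    {"Customer Name": "Luis Gomez", "Signup": "15/03/2024", "Plan": "free"},
]


def transform(row):
    """Adapt one source row to the target model (names, types, formats)."""
    signup = datetime.strptime(row["Signup"], "%d/%m/%Y").date().isoformat()
    return (row["Customer Name"].strip(), signup, row["Plan"].lower())


def load(rows):
    """Create the predefined table and insert the already transformed rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE dim_customer ("
        "name TEXT NOT NULL, "
        "signup_date TEXT NOT NULL, "
        "tier TEXT NOT NULL CHECK (tier IN ('free', 'pro')))"
    )
    conn.executemany("INSERT INTO dim_customer VALUES (?, ?, ?)", rows)
    return conn


warehouse = load(transform(row) for row in CRM_ROWS)
loaded = warehouse.execute(
    "SELECT name, signup_date, tier FROM dim_customer ORDER BY name"
).fetchall()
print(loaded)
```

The `CHECK` constraint is one way to have the model reject values the transformation failed to normalize, instead of silently storing them.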

- Determine the lifetime of the data

Finally, we need to decide how long the different data will remain available.

As we already know, storing information in a data warehouse is expensive. Therefore, as we constantly add new information, we must decide the “expiration date” of the stored information.
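One possible way to enforce an expiration date is a periodic purge job. The sketch below assumes hypothetical retention periods per table and a `created_on` column holding ISO dates; both are illustrative choices, not a prescribed design.

```python
import sqlite3
from datetime import date, timedelta

# Hypothetical retention periods per table, in days.
RETENTION_DAYS = {"web_sessions": 90, "orders": 365 * 5}


def purge_expired(conn, table, today):
    """Delete rows older than the table's retention period; return rows removed."""
    cutoff = (today - timedelta(days=RETENTION_DAYS[table])).isoformat()
    # The table name must be a key of RETENTION_DAYS, so it never comes from user input.
    cursor = conn.execute(f"DELETE FROM {table} WHERE created_on < ?", (cutoff,))
    conn.commit()
    return cursor.rowcount


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE web_sessions (id INTEGER PRIMARY KEY, created_on TEXT)")
conn.executemany(
    "INSERT INTO web_sessions (created_on) VALUES (?)",
    [("2023-01-10",), ("2024-05-01",), ("2024-06-20",)],
)
removed = purge_expired(conn, "web_sessions", today=date(2024, 7, 1))
remaining = conn.execute("SELECT COUNT(*) FROM web_sessions").fetchone()[0]
print(removed, remaining)
```

Comparing ISO date strings works here because that format sorts chronologically; a different date format would need a real date comparison.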

How is a Data Lake implemented?

- “Landing Zone” for raw data

In this first stage, the data lake is built separately from the central IT systems and serves as a low-cost, scalable environment for pure data capture.

The idea is to use it as a thin layer in the company’s technology stack to have data stored indefinitely.

Although this stage has very little impact on the existing architecture, classifying and tagging the data is highly relevant in the early phases, so as not to end up with a disorganized data repository (a data swamp).
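As a rough sketch of what classification and tagging can look like in a landing zone, the code below stores each raw payload untouched and writes a small metadata file next to it. The folder layout (`raw/<source>/<date>/`), the `_metadata.json` file name, and the tags are all assumptions made for illustration; a local temporary directory stands in for the lake's storage.

```python
import json
import tempfile
from datetime import date
from pathlib import Path


def land_raw_file(lake_root, source, payload, tags, received_on):
    """Store a raw payload untouched, next to a small metadata file describing it."""
    target_dir = Path(lake_root) / "raw" / source / received_on.isoformat()
    target_dir.mkdir(parents=True, exist_ok=True)
    data_path = target_dir / "data.json"
    data_path.write_bytes(payload)  # raw bytes, no transformation
    metadata = {
        "source": source,
        "received_on": received_on.isoformat(),
        "tags": sorted(tags),
    }
    (target_dir / "_metadata.json").write_text(json.dumps(metadata, indent=2))
    return data_path


def find_by_tag(lake_root, tag):
    """List raw files whose metadata carries the given tag."""
    matches = []
    for meta_path in Path(lake_root).rglob("_metadata.json"):
        if tag in json.loads(meta_path.read_text())["tags"]:
            matches.append(meta_path.parent / "data.json")
    return sorted(matches)


lake = tempfile.mkdtemp()
land_raw_file(lake, "web_clicks", b'{"page": "/pricing"}', {"marketing"}, date(2024, 3, 1))
land_raw_file(lake, "support_emails", b'{"body": "..."}', {"support", "pii"}, date(2024, 3, 1))
marketing_files = find_by_tag(lake, "marketing")
print(marketing_files)
```

Even this small amount of metadata lets someone find data by topic or sensitivity later without opening every file, which is the property that keeps the repository from turning into a swamp.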

- Data science environment

Now, the startup can already use the data actively and count on the data lake as an experimentation platform.

Data scientists will have quick and easy access to all the information they need, being able to focus on analysis and exploration, rather than data acquisition and collection.

- Integration with data warehouses

Here the data lakes are already integrated with the existing data warehouses. Taking advantage of the lower costs associated with the data lake, many companies choose to keep their “cold” data in it, while data that is used more frequently is migrated to a data warehouse.

Data lakes have the strength of being able to integrate progressively with the data warehouses that already exist in the organization, so that both coexist efficiently.

As we can see, the data lake does not replace the data warehouse, but working together they enhance the storage capacity of an organization.

By adding a data lake to areas of an organization’s existing infrastructure, we can add its flexibility and agility to the existing storage.
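The split between frequently used data and rarely used data in this stage can be sketched as a simple planning rule. The `Dataset` class, the read counts, and the threshold of 50 reads per 30 days are hypothetical; a real organization would choose its own signal and cut-off.

```python
from dataclasses import dataclass


@dataclass
class Dataset:
    name: str
    reads_last_30_days: int


# Hypothetical threshold: datasets read at least this often count as frequently used.
HOT_READS_THRESHOLD = 50


def plan_tiers(datasets, threshold=HOT_READS_THRESHOLD):
    """Split datasets into those to migrate to the warehouse and those to keep in the lake."""
    plan = {"warehouse": [], "lake": []}
    for dataset in datasets:
        tier = "warehouse" if dataset.reads_last_30_days >= threshold else "lake"
        plan[tier].append(dataset.name)
    return plan


catalog = [
    Dataset("orders", 420),
    Dataset("clickstream_2019", 2),
    Dataset("customers", 75),
]
tier_plan = plan_tiers(catalog)
print(tier_plan)
```

The function only produces a plan; the actual migration would reuse whatever load and transform process already feeds the warehouse.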

- The critical component

At this point, the data lake is fully integrated into the organization’s infrastructure and practically all the information flows through it.

Once stability has been reached, interfaces can be built, either to improve access to the information in the data lake or to integrate that information better with the computing processes that use it, such as machine learning.

How each strategy adds value

As we can see, these two data storage alternatives are very different from each other and serve different purposes.

As a general conclusion, we can affirm that the data warehouse responds to more mature needs when we already know which data is the most important and what type of work we are going to do with it.

On the other hand, data lakes are more agile, cheap, and flexible alternatives: highly desirable attributes for young companies, such as your startup.

Data lakes can be especially effective when we do not yet know what to do with all our data, but we know that the information is potentially valuable.

In these cases, we can make use of data science. A data scientist will be able to gather all that information, which looks unstructured and disparate to the naked eye, and ask the right questions to identify its potential.

You can get concrete and unexpected results, such as products and services that had not even been foreseen at the time of data collection.

On the other hand, it is also perfectly possible to tackle data science projects starting from a data warehouse.

In this case, the benefit is that we do not have to spend time and resources structuring and normalizing the data, and we also get more efficient access to it.
